Technology has always chased the magic of the human voice. A voice is not just sound. It is warmth, rhythm, hesitation, excitement and memory stitched into vibration. To understand how machines have learned to speak is to imagine a sculptor working with air instead of stone. That sculptor chips away silence, refines tone, and tunes emotion until synthetic breath feels like something alive. The story of AI speech generation is not only about clearer pronunciation. It is about teaching machines to carry feelings.

The Mechanical Murmur: When Voices Were Wires

In the early days, machine speech resembled a wind-up toy trying to read poetry. Robotic monotones called out bus station announcements and customer support menu options. These voices were functional, rigid and hollow. They were designed to transmit information, not emotion.

Imagine listening to someone who has never felt joy or sorrow. You hear every word, but nothing lands. That was the first era of synthetic speech. The machine could mimic the shape of language, but not its soul. Engineers worked tirelessly to refine tone, pace and clarity. Yet the voice still sounded distant, as if spoken from a cold corridor lined with metal.

Then the field shifted. The machine voice started learning not only what to say but how to say it.

Teaching Machines to Breathe: Neural Speech Models

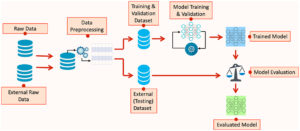

A breakthrough arrived when researchers treated speech not as a string of syllables but as a living performance. Neural networks studied thousands of hours of real conversation. They absorbed how laughter lifts a word, how grief lowers a sentence, how surprise bends tone upward like a question mark floating in air.

During this period, companies and professionals began to seek structured learning experiences to understand the shift in synthetic voice generation. Some learners chose immersive programs such as gen AI training in Hyderabad to explore how neural techniques are reshaping communication. Through such training, they gained exposure to how speech models replicate breath, pause and resonance.

The machine voice began to smooth its edges. It learned to take tiny pauses, those moments of reflection and emphasis that make speech feel natural. The result was a voice that seemed less like a script and more like a conversation.

The Emotional Layer: Voices That Feel

Speech is not just information transfer. It is emotion expressed through sound. When we speak, we are always revealing how we feel, even if our words say otherwise. The newest generation of synthetic voices focuses on this hidden layer.

These systems listen for patterns of feeling. They trace how happiness stretches vowels, how nervousness compresses them, how sarcasm adds a small twist of tone. They then reflect these patterns back to the listener.
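For readers who want something concrete, here is a minimal sketch of how a feeling might be turned into speaking instructions. It uses SSML, the W3C markup that many speech engines accept, and its `prosody` and `break` elements. The emotion table, its values and the `to_ssml` helper are assumptions made for illustration. Neural voices learn these patterns from data rather than from a lookup table, and a real engine may also expect version and namespace attributes on `speak`.

```python
from xml.sax.saxutils import escape

# Hypothetical emotion-to-prosody table. The numbers are illustrative, not measured.
PROSODY = {
    "happy": {"rate": "90%", "pitch": "+10%"},    # slower rate stretches vowels
    "nervous": {"rate": "115%", "pitch": "+5%"},  # faster rate compresses them
    "neutral": {"rate": "100%", "pitch": "+0%"},
}


def to_ssml(text, emotion="neutral", pause_ms=0):
    """Wrap text in SSML prosody markup, with an optional trailing pause."""
    settings = PROSODY.get(emotion, PROSODY["neutral"])
    pause = f'<break time="{pause_ms}ms"/>' if pause_ms > 0 else ""
    return (
        f'<speak><prosody rate="{settings["rate"]}" pitch="{settings["pitch"]}">'
        f"{escape(text)}</prosody>{pause}</speak>"
    )


print(to_ssml("We won!", "happy", pause_ms=300))
```

The same sentence passed with "nervous" comes back with a faster rate, which is the compression described above in its crudest form.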

Think of a storyteller by a fireside. Their voice rises when the hero triumphs and softens in the face of loss. Modern AI voices strive to evoke that same sense of connection. They can narrate children’s stories with warmth or deliver medical instructions with gentle steadiness.

Voice actors, once the gatekeepers of emotional tone, now collaborate with models, lending their expressiveness as training material. Instead of replacing creative expression, AI sometimes becomes a powerful amplifier of human storytelling.

Identity, Ethics, and the Boundaries of Voice

When machines begin to speak with emotional authenticity, new questions surface. Who does the voice belong to? What happens when a synthetic voice can perfectly imitate a real person?

There is both hope and caution in this frontier. Synthetic voices have given speech to stroke survivors, enabled multilingual storytelling, and opened new possibilities in accessibility tools. Yet the same power could be used to create impersonations, deepfakes or manipulative media.

Responsible development requires transparency, consent and traceability in how voices are recorded and used. Ethical practice is not optional. It is the foundation that keeps trust intact.

Professionals exploring responsible voice technologies sometimes engage in structured learning environments such as gen AI training in Hyderabad, where ethical frameworks and real-world implications are studied as carefully as the algorithms themselves.

Future voice systems may come with “watermarks” or identity flags that help listeners verify where a voice came from. The debate over ownership and consent will likely grow, not shrink, as voice synthesis becomes more lifelike.
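One simple form an identity flag could take is a signed tag that travels with the audio file. The sketch below is an assumption about how that might look, not a description of any shipping system. The names `sign_audio` and `verify_audio` are hypothetical, and the tag is a keyed hash stored alongside the audio rather than a watermark hidden inside the sound itself, so it would not survive re-encoding.

```python
import hashlib
import hmac


def sign_audio(audio_bytes: bytes, key: bytes) -> str:
    """Return a provenance tag: an HMAC-SHA256 over the raw audio bytes."""
    return hmac.new(key, audio_bytes, hashlib.sha256).hexdigest()


def verify_audio(audio_bytes: bytes, key: bytes, tag: str) -> bool:
    """Check the tag in constant time; any change to the audio breaks the match."""
    return hmac.compare_digest(sign_audio(audio_bytes, key), tag)


# Illustrative values only: stand-in bytes and a demo key.
clip = b"synthetic-voice-sample"
key = b"publisher-secret-key"
tag = sign_audio(clip, key)

print(verify_audio(clip, key, tag))
print(verify_audio(clip + b"tampered", key, tag))
```

A tag like this can only say that a clip is unchanged since a known publisher signed it. Proving that a voice was used with consent still depends on the human agreements behind the key.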

Conclusion: The Voice That Connects Us

The story of AI speech generation is about more than technology. It is about the human desire to be understood. A voice is the bridge between one heart and another. As machines learn to cross that bridge, they become more than tools. They become collaborators in communication.

We are not teaching machines simply to speak. We are teaching them to care how they speak. The next chapter may not be about perfect mimicry but about building voices that adapt, understand and respond.

Yet, beneath every synthetic voice lies a simple truth: the emotional meaning still comes from us. Machines may learn to echo our feelings, but we remain the source.